So many people are worried about Artificial Intelligence becoming more powerful than humans.

At one end of the spectrum are those who watch Hollywood science fiction blockbusters depicting superintelligent machines deciding to destroy the human virus. At the other end are those who closely follow complex scientific advancements that seem to all but guarantee the eventual end of the human species.

In the middle are calm-spoken, well-regarded futurists with excellent track records of accurately predicting the future. The most notable of them is Ray Kurzweil. In the compelling 2009 documentary “Transcendent Man”, he explains that technology’s exponential growth will consume humanity far sooner than most people think.

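The most promoted advancement in Artificial Intelligence in the last 20 years is something called Deep Learning. It is usually mentioned in the same breath as a string of famous machine-over-human victories, although the earlier ones relied on other techniques such as brute-force search: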

- IBM’s Deep Blue beating world chess champion Garry Kasparov in 1997

- IBM’s Watson beating Jeopardy! champions Ken Jennings and Brad Rutter in 2011

- Google’s AlphaGo beating Lee Sedol, one of the world’s best players of the incredibly complex ancient Chinese board game “Go”, in 2016

The AI technology behind these much publicized wins over humanity has only advanced since. As the slogan of our sister site, www.URTech.ca, reads: “technology doesn’t stop”.

So are we lowly humans right to be worried? I know that at various points in the last few decades, I have been quite concerned.

Let’s examine this most high-profile of all AI technologies a bit further to investigate this question.

At its core, Deep Learning is based on a very simple notion: a computer can develop its own pattern recognition to answer a question.

For example, while any school-age child can easily recognize all of these as the letter “A”, computers could not do it accurately until the late 1990s. What changed?

After 50 years of serious work on AI, computers that “recognize” alphanumeric characters could only do so if a near-exact font match had been manually fed into them and tedious tuning of variables like color, size and italics had been programmed in. That all changed with the advent of Deep Learning in the 1990s.

Deep Learning algorithms allow computers to calculate their own variables by feeding them truly massive quantities of examples. That is why today, internet data has become so valuable. Deep Learning computers now have access to tens if not hundreds of millions of examples of… well everything. From that exposure they can “learn”.
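To make the idea of a computer “calculating its own variables” a little more concrete, here is a minimal sketch in Python. It is an illustration under simplifying assumptions, not how any production system works: it uses a single perceptron, a much simpler ancestor of deep learning networks (which stack many layers of such units), and teaches it the logical OR rule from four labelled examples. The function names, the learning rate and the OR example are our own hypothetical choices, not any library’s API.

```python
# Hypothetical illustration: a single perceptron "learns its own variables"
# (weights and bias) from labelled examples instead of having them hand-programmed.
# Real deep learning stacks many layers of similar units; this is deliberately tiny.


def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=0.1):
    """Learn weights and bias from (inputs, label) pairs, where labels are 0 or 1."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, label in examples:
            error = label - predict(weights, bias, inputs)  # -1, 0 or +1
            if error != 0:
                mistakes += 1
                # Nudge each weight (and the bias) toward the correct answer
                weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:  # every example is classified correctly, so stop early
            break
    return weights, bias


# Four labelled examples of the OR rule: output 1 if either input is 1
OR_EXAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train_perceptron(OR_EXAMPLES)
print([predict(weights, bias, x) for x, _ in OR_EXAMPLES])  # -> [0, 1, 1, 1]
```

Nobody typed in the final weights; the loop found them by repeatedly correcting its own mistakes on the examples. Deep learning systems do the same thing with millions of weights, which is part of why their inner workings are so hard to inspect.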

Here is the problem. Because these algorithms let computers calculate their own variables, they are what tech and science people call a “black box”: we really don’t know how they arrive at their answers. What we can say is that they take existing data and extrapolate from it to guess at the right answer.

I recently read an excellent article by Judea Pearl, who put it this way:

“… (Deep Learning) works well, and we don’t know why… Once you unleash it on large data, Deep Learning has its own dynamics, it does its own repair and its own optimization and it gives you the right results most of the time but when it doesn’t, you don’t have a clue about what went wrong and what should be fixed.” Source: Page 16

What all of us humans are worried about isn’t computers being able to find the best chess move or determine whether that letter is actually an “A”. What we’re worried about is computers actually reasoning through “What If” scenarios that go far beyond the data sets they have analyzed.

Knowing the five statistically best next chess moves has no value when you deal with real-world variables. What happens if the human player gets scared and makes an otherwise irrational move? What if the board is expanded to include another row of squares? In these circumstances deep learning has no answers.

What humans are afraid of is what the scientific world calls “Strong AI”. Strong AI is not just the pattern recognition and prediction that Deep Learning provides; it is the ability to synthesize diverse inputs.

Pearl puts it this way:

“…such systems cannot reason about “what if” questions and therefore cannot serve as the basis for strong AI, that is artificial intelligence that emulates human level reasoning and competence. (These) learning machines need the guidance of a blueprint of reality, a model similar to a road map that guides us in driving through an unfamiliar city.” Source: Page 17

Deep Learning will keep advancing, including in places like Canada’s golden AI Triangle (Ottawa, Montreal and Toronto, or more accurately Guelph), but it will still max out and remain incapable of dealing with otherwise extraneous factors.

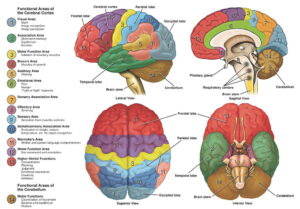

We can gauge the real concern about deep learning taking over humanity by following the Darwinian development of the human brain. Our brains are not one uniform chunk of matter. They are composed of many highly distinct regions.

It is not just silly conjecture to think that Deep Learning Artificial Intelligence is likely to become much like our human brain stem, which controls only our most basic functions like breathing and heart rate. Brain stems do not “reason”; they just quickly calculate and then control what needs to be done to exist.

So while deep learning deservedly makes the impressive headlines and movies we see today, it really is no immediate threat to humanity. The threat is whatever comes next and sits on top of a deep learning “brain stem”, such as a digital “frontal lobe” or a digital “emotional area”.

If a computer algorithm could rationalize and reason over literally all of the data ever collected, the way Deep Learning finds patterns in it, it could crush us all, but that day is not coming any time soon.
